Talent acquisition leaders worldwide are navigating a real tension: AI tools promise speed and consistency, yet candidates increasingly report feeling processed rather than considered. The assumption that AI inherently dehumanizes hiring is widespread, but it is not entirely accurate. The real risk is not AI itself. It is how AI is implemented without a clear strategy for preserving the human moments that matter most. This guide addresses that gap directly, offering frameworks, data, and member-tested techniques for building recruitment processes where AI amplifies rather than diminishes authentic candidate engagement.

Table of Contents

- Why authenticity matters more in the era of AI

- AI's strengths and blind spots in candidate engagement

- Designing AI-powered processes that foster authenticity

- Real-world success stories and pitfalls to avoid

- Our take: Authenticity isn't optional in AI hiring

- Next steps: Connect and build authentic, AI-enhanced hiring

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Balance automation with empathy | AI streamlines hiring but must be paired with human touch to support authentic candidate connections. |
| Monitor for hidden bias | Recruiters should regularly check AI tools for bias and fairness in their processes. |
| Adopt a hybrid approach | Hybrid models combine AI efficiency with human judgment for engaging and equitable hiring experiences. |
| Prioritize candidate feedback | Looping in candidate insights helps refine both AI and recruiter interactions for authenticity and trust. |
| Invest in continuous learning | Peer mentoring and community connections enable talent leaders to adjust to evolving technologies while maintaining authenticity. |

Why authenticity matters more in the era of AI

Authenticity in candidate experience refers to the degree to which candidates feel they are being seen, assessed fairly, and communicated with honestly throughout the hiring process. In global talent acquisition, this is not a soft concept. It directly affects conversion rates, offer acceptance, and long-term employer brand perception. When candidates feel processed by an algorithm rather than engaged by an organization, the downstream effects show up in declined offers, negative reviews, and reduced referral pipelines.

The concern is well-founded. Forty-two percent of respondents say they trust AI less in hiring decisions, according to research cited by Harvard Business Review, which also found that AI has broadly contributed to a perceived decline in the quality and fairness of hiring interactions. This is a significant trust deficit that talent leaders cannot afford to ignore. The growth of AI's impact on job searches has also shifted candidate behavior, with applicants now actively optimizing their materials for algorithmic screening, sometimes at the expense of genuine representation.

Authentic candidate experiences produce measurable outcomes:

- Higher engagement rates throughout the recruitment funnel

- Stronger employer brand recognition among passive candidates

- Better long-term retention among new hires who accepted offers based on honest process expectations

- Greater willingness to reapply or refer others after a rejection

- Reduced candidate drop-off during multi-stage assessment processes

"AI has, in many documented cases, made hiring worse by eroding the trust candidates place in organizations. The technology is not neutral in its effects on candidate perception." Source: Harvard Business Review, 2026

Understanding AI vs. human decision making in selection contexts is a foundational step. Talent leaders need to know precisely where algorithmic judgment adds value and where it introduces friction or inequity. The way candidate perceptions of AI hiring have shifted in recent years makes it clear that transparency and intentional design are no longer optional.

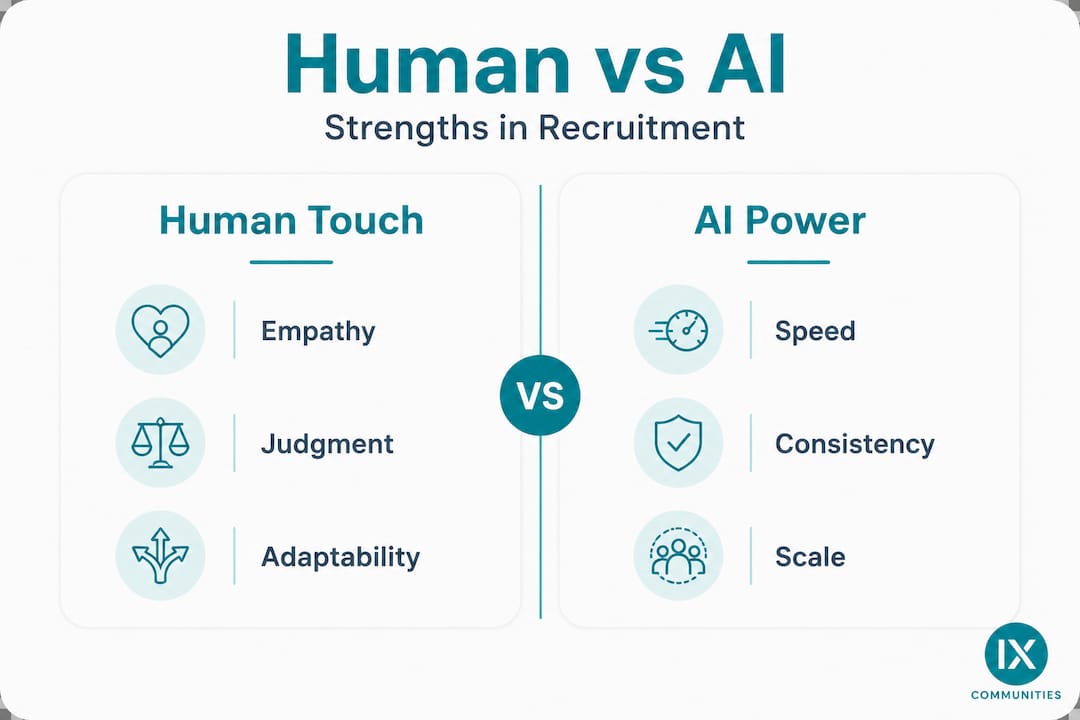

AI's strengths and blind spots in candidate engagement

With the case for authenticity established, the next step is to pinpoint where AI helps it and where AI hinders it, so you can design your process accordingly.

AI brings real capabilities to high-volume hiring. It reduces the time burden on recruiter teams, creates more consistent candidate communication across time zones and languages, and, when properly calibrated, can reduce certain forms of human bias in initial screening. For global firms managing thousands of applications per quarter, these are not trivial gains.

However, AI carries equally real limitations. It cannot read emotional cues. It struggles with atypical candidates whose non-linear career paths do not fit the training data. And it can amplify historical biases if the underlying data reflects past inequities. Research indicates that AI can reduce both bias and time spent in screening, while simultaneously eroding authenticity and trust when it dominates candidate-facing interactions.

Human vs. AI in candidate experience

| Dimension | Human strength | AI strength | Human blind spot | AI blind spot |
|---|---|---|---|---|
| Empathy and emotional cues | High | Low | Inconsistency across recruiters | Cannot detect distress or hesitation |
| Communication consistency | Variable | High | Time zone limitations | Lacks contextual nuance |
| Bias mitigation | Dependent on training | Possible if audited | Affinity bias, halo effect | Amplifies historical data bias |
| Handling edge cases | Strong | Weak | May penalize non-traditional paths | Rejects atypical profiles |
| Speed and scalability | Limited | High | Bottlenecks in high-volume periods | No judgment on fit beyond criteria |

The candidate touchpoints that most benefit from human ownership include:

- Initial outreach and relationship-building for senior or specialized roles

- Interviews involving complex behavioral or situational judgment

- Offer negotiation and candidate-specific flexibility conversations

- Post-rejection feedback, especially for candidates who invested significant time

- Situations involving a candidate disclosing a disability or accommodation need

One area where member organizations have found particular value is addressing candidate-side AI use during live interviews. Members have shared that video interview panels now regularly notice candidates pausing to read from off-screen scripts or giving unnaturally polished responses that suggest real-time AI text generation. Effective countermeasures include asking spontaneous follow-up questions that require genuine elaboration, varying question order unpredictably, and asking for specific anecdotes with real-time detail verification, such as requesting that candidates describe particular colleagues or outcomes in the moment. Reviewing AI-driven recruitment benchmarks from peer organizations can help calibrate where your process stands relative to industry norms.

Pro Tip: When pairing AI screening with human interviews, brief your interviewers on what the AI flagged and what it cannot assess. This allows interviewers to fill gaps intentionally rather than redundantly validating what the algorithm already scored. It also gives interviewers permission to probe areas the AI ranked lower, which is often where the most interesting candidate qualities live. For candidates optimizing their materials through AI tools, this kind of human probe is also your best quality check.

Designing AI-powered processes that foster authenticity

Having examined the pros and cons, let's focus on actionable strategies to implement an AI-driven selection process that still feels uniquely human.

Governance is the starting point. Every AI tool deployed in candidate-facing workflows should be subject to regular audits that check for disparate impact across demographic groups. Governance prevents bias amplification and is especially critical when AI tools are used for resume parsing, video interview analysis, or automated scoring. Governance also covers transparency: candidates should know when AI is evaluating them and what criteria are being applied.
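One way to make the disparate impact check concrete is to compare selection rates across groups. The sketch below assumes a simple list of (group, advanced) records exported from a screening tool, and it uses the four-fifths rule of thumb as the flag threshold: a group whose selection rate falls below 80% of the highest group's rate is treated as a signal worth investigating. The function names, group labels, and sample counts are hypothetical, and a flag here is a prompt for human review, not a legal determination.

```python
from collections import Counter


def selection_rates(records):
    """Return the share of candidates advanced per group.

    records: iterable of (group_label, advanced) pairs, where advanced is a bool.
    """
    totals = Counter()
    advanced = Counter()
    for group, passed in records:
        totals[group] += 1
        if passed:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody advanced in any group, so no ratio can be computed.
        return {group: None for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose impact ratio falls below the threshold (assumed 0.8)."""
    return sorted(
        group for group, ratio in ratios.items()
        if ratio is not None and ratio < threshold
    )


if __name__ == "__main__":
    # Hypothetical screening export: 40 applicants in group_a, 50 in group_b.
    sample = (
        [("group_a", True)] * 20 + [("group_a", False)] * 20
        + [("group_b", True)] * 15 + [("group_b", False)] * 35
    )
    rates = selection_rates(sample)
    ratios = impact_ratios(rates)
    for group in sorted(rates):
        print(f"{group}: rate={rates[group]:.2f}, ratio={ratios[group]:.2f}")
    print("Review needed for:", flag_groups(ratios))
```

Running a check like this on a regular schedule, per tool and per hiring stage, gives the audit a repeatable baseline. Small sample sizes can make the ratios unstable, so flagged results are best read alongside the underlying counts.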

Hybrid models are consistently identified as the most effective approach. According to expert consensus, they yield the best outcomes because they assign AI to high-volume, repetitive tasks while keeping humans responsible for judgment-intensive moments. This is not just about risk management. It is about designing a candidate experience where efficiency and engagement coexist.

Recommended ownership by touchpoint

| Hiring stage | Recommended owner | Rationale |
|---|---|---|
| Application intake and resume screening | AI | Volume and consistency |
| Initial candidate communication | AI with human review | Speed with quality check |
| Scheduling and logistics | AI | Reduces administrative friction |
| First-round behavioral interview | Human with AI notetaking | Relationship and empathy |
| Structured skills assessment | AI scored, human reviewed | Consistency and fairness |
| Final interview and offer | Human | Judgment, flexibility, and relationship |
| Post-process feedback | Human | Trust and brand protection |

Implementing candidate feedback loops is one of the most underused tactics in AI-enabled hiring. Here is a straightforward process:

- Send a short post-interview survey to all candidates, regardless of outcome

- Use AI to categorize and aggregate feedback themes at scale

- Review flagged themes quarterly with your recruitment operations team

- Update AI scoring criteria and process steps based on patterns identified

- Communicate process changes internally so recruiters understand what shifted and why
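The aggregation and review steps above can be sketched in a few lines. This example uses plain keyword matching as a stand-in for whatever AI categorization tool an organization actually uses. The theme names, keywords, sample comments, and the review threshold are all assumptions chosen for illustration.

```python
from collections import Counter

# Hypothetical theme dictionary; a real deployment would likely use a
# trained classifier or an AI tagging service instead of keywords.
THEME_KEYWORDS = {
    "communication": ("heard back", "response", "update"),
    "fairness": ("fair", "bias"),
    "automation": ("chatbot", "automated", "impersonal"),
}


def tag_themes(comment):
    """Return the set of themes whose keywords appear in a comment."""
    text = comment.lower()
    return {
        theme
        for theme, keywords in THEME_KEYWORDS.items()
        if any(keyword in text for keyword in keywords)
    }


def aggregate_themes(comments):
    """Count how many comments mention each theme."""
    counts = Counter()
    for comment in comments:
        counts.update(tag_themes(comment))
    return counts


def themes_to_review(counts, total_comments, min_share=0.25):
    """List themes raised in at least min_share of comments (assumed cutoff)."""
    if total_comments == 0:
        return []
    return sorted(
        theme for theme, count in counts.items()
        if count / total_comments >= min_share
    )


if __name__ == "__main__":
    survey_comments = [
        "Never heard back after the final round.",
        "The chatbot screening felt impersonal.",
        "Interviewers were great, but scoring seemed unfair.",
        "Automated emails only, no human contact.",
    ]
    counts = aggregate_themes(survey_comments)
    print(dict(counts))
    print(themes_to_review(counts, len(survey_comments), min_share=0.5))
```

The output of a step like this is only an input to the quarterly review. Deciding which scoring criteria or process steps to change remains a human call.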

One specific challenge members have raised is communicating clearly to candidates that the organization uses AI for notetaking and assessment, while simultaneously asking candidates not to use AI assistance during the interview itself. This is a governance question as much as a communication one. Best practice is to include explicit language in the interview invitation, explain the rationale briefly (the organization wants to assess the candidate's own capabilities), and ensure that interviewers are equipped to handle candidates who ask follow-up questions about this policy.

Pro Tip: Use off-script, spontaneous questions during AI-assisted video interviews to maintain authenticity and security. Ask candidates to describe a specific moment from a past project in real time, including who else was present and what they said. This format is difficult to script in advance and nearly impossible to answer convincingly using real-time AI text generation. It also surfaces genuine candidate capability more reliably than structured questions alone. Integrating AI tools in hiring within a governance framework helps you scale this kind of intentional design. For broader technology selection guidance, the recruitment technology tools reference is a useful resource. Firms focused on inclusive design should also consult resources on diversity and AI in hiring to ensure their hybrid models account for equity at each stage.

Real-world success stories and pitfalls to avoid

With practical frameworks in hand, real-world examples can illustrate what actually works and what to avoid in the pursuit of authentic candidate experiences.

Organizations that have successfully adopted hybrid models share a consistent pattern: they invested in change management alongside technology. They trained hiring managers to understand what AI could and could not assess. They built transparent candidate-facing language about how AI was used. And they maintained a human point of contact at every stage where a candidate might have a substantive question or concern.

The pitfalls are equally consistent across sectors. Over-automated processes, where candidates interact only with chatbots and automated emails from application through final assessment, create a stark experience gap. Candidates report feeling invisible. Lack of transparency about AI use generates suspicion and, in some markets, legal exposure. Insufficient bias monitoring allows discriminatory patterns to compound over time, particularly in video interview AI tools that have documented problems with rating candidates from certain demographic backgrounds lower without valid job-related justification.

Key success factors in AI-enabled, authentic hiring include:

- Clear governance documentation for every AI tool in the recruiting stack

- Defined escalation paths when AI flags a candidate in ways that seem anomalous

- Training hiring managers on how to review and contextualize AI-generated assessments

- Transparent candidate communication about AI's role in the process

- Regular candidate experience audits using both survey data and drop-off analysis

- A named human contact point for any candidate with process concerns
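The drop-off analysis mentioned in the list above can start as a simple stage-to-stage calculation. The sketch below assumes you can export, for each hiring stage in order, the number of candidates who entered it. The stage names and counts are hypothetical.

```python
def stage_drop_off(funnel):
    """Compute the share of candidates lost between consecutive stages.

    funnel: ordered list of (stage_name, candidates_entering) pairs.
    Returns a list of (stage_name, drop_off_rate) for every stage that
    has a following stage.
    """
    results = []
    for (stage, entered), (_, next_entered) in zip(funnel, funnel[1:]):
        rate = (entered - next_entered) / entered if entered else 0.0
        results.append((stage, rate))
    return results


def worst_stage(drop_offs):
    """Return the (stage, rate) pair with the highest drop-off, or None."""
    if not drop_offs:
        return None
    return max(drop_offs, key=lambda item: item[1])


if __name__ == "__main__":
    # Hypothetical quarterly funnel export.
    funnel = [
        ("application", 1000),
        ("skills_assessment", 400),
        ("first_interview", 200),
        ("final_interview", 50),
    ]
    drop_offs = stage_drop_off(funnel)
    for stage, rate in drop_offs:
        print(f"after {stage}: {rate:.0%} did not continue")
    print("Largest drop-off:", worst_stage(drop_offs))
```

These rates mix candidates who withdrew with candidates who were screened out, so they are most useful when paired with survey data that explains why people left at a given stage.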

"According to NACE research, 53% of candidates disagree that AI-driven hiring is equitable. Harvard Business Review notes a perceived decline in authenticity as AI becomes more prevalent in recruitment." Source: HBR, 2026

This equity perception gap has real consequences for AI in executive hiring, where candidates are evaluating the organization as much as the role. Senior candidates who experience an impersonal, fully automated screening process often conclude that the organization's culture does not match its stated values. Strong branding and candidate experience design directly offset this risk by creating coherence between what candidates read about an employer and what they actually experience during the hiring process.

Our take: Authenticity isn't optional in AI hiring

Most organizations adopting AI in recruitment have over-indexed on efficiency metrics. Time to fill, cost per hire, and screening volume are easy to measure and improve with automation. What is harder to quantify is the quality of every candidate's experience, and so it often goes unmeasured until something breaks, such as a spike in offer declines, a wave of negative Glassdoor reviews, or a public complaint about discriminatory screening.

The organizations that get this right do not treat authenticity as a byproduct of a well-run AI deployment. They treat it as a design requirement. Every process decision is evaluated against a simple question: does this step make the candidate feel fairly assessed and honestly engaged? If the answer is unclear, a human touchpoint is added.

AI is a lever for scale. It should free up recruiter capacity to invest in the interactions that require judgment, empathy, and genuine conversation. When AI handles the administrative load, recruiters have more time to build real relationships with candidates, especially in competitive talent markets where a single conversation can determine whether a candidate accepts or declines.

Talent leaders also need to keep learning. The AI landscape in recruitment is changing faster than most organizations can update their governance frameworks. Peer mentorship for recruiters is one of the most practical mechanisms for staying current because it provides direct access to what peer organizations are actually doing, including what is working and what has backfired. Institutional knowledge shared in trusted peer environments moves faster and is more actionable than most published research.

The candidate of 2026 is sophisticated. They know when they are talking to a bot. They notice when feedback is templated. They compare their experience to what the organization publicly says about its values. Authenticity is not just a nice attribute in AI-powered hiring. It is a competitive differentiator.

Next steps: Connect and build authentic, AI-enhanced hiring

For talent leaders ready to take action, here is how you can tap into leading resources and networks to drive authentic, AI-enhanced recruitment.

The strategies in this article are most effective when grounded in real peer experience. IXCommunities provides the structured environment for that kind of learning. Through the Talent Leaders Peer Mentoring Program, senior professionals in global talent acquisition connect directly with peers managing the same challenges around AI adoption, candidate experience design, and governance.

Access to IX Communities Membership opens a secure environment where departments share frameworks, tools, and process templates that have already been tested in large-scale corporate settings. Members also access the Benchmark Surveys platform to compare their AI adoption and candidate experience metrics against peer organizations, giving them the data to make confident decisions about where to invest and where to pull back.

Frequently asked questions

How can AI improve authenticity in hiring without losing the human touch?

AI frees up recruiters to focus on personal interaction by handling routine tasks, but hybrid models keep judgment and empathy firmly in the human domain, which is where candidates most need it.

What risks should talent leaders watch for when using AI in recruitment?

Leaders should monitor for amplified biases, transparency gaps, and over-automation. Research shows 42% of respondents trust AI less in hiring, and more than half of candidates question whether AI-driven processes are equitable.

What practical steps promote authenticity in AI-driven hiring?

Implement regular audits for bias, integrate feedback loops from candidates, and blend human oversight into AI-powered touchpoints. Governance prevents bias amplification and keeps the process defensible and fair.

Can too much automation hurt employer branding?

Yes. Excessive automation makes candidates feel undervalued, and AI erodes authenticity and trust when it dominates candidate-facing interactions, which translates directly into negative employer brand perception over time.