AI adoption in leadership hiring is accelerating, yet governance frameworks often fail to keep pace, particularly in large organizations. The gap raises serious compliance and ethical risks for corporate leaders. Many Chief People Officers and Heads of Talent Acquisition operate under common misconceptions: that AI is inherently neutral, that transparency is automatic, or that existing HR policies are sufficient to govern algorithmic decisions. None of these assumptions holds up under scrutiny. This article maps out the core concepts, practical mechanisms, and measurable benchmarks talent leaders need to build a sound AI governance strategy for executive and leadership hiring in 2026.

Table of Contents

- Understanding AI governance in leadership hiring

- Key governance mechanisms: How organizations ensure ethical AI

- Bias and edge cases: Addressing AI pitfalls in leadership candidate selection

- Benchmarks and maturity: Measuring governance and hiring outcomes

- What most organizations miss about AI governance in executive hiring

- Peer mentoring and resources to advance your AI leadership hiring strategy

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Governance first | Effective AI leadership hiring starts with strong governance frameworks to minimize bias and maximize transparency. |
| Mechanisms matter | Cross-functional oversight, bias testing, and human override processes are essential for ethical AI use. |
| Benchmarks for maturity | Use empirical data and outcome metrics to measure and improve your AI governance strategy. |
| Address edge cases | Test for hidden biases in AI models and proactively manage risks such as ordinal or prestige bias. |
| Leverage peer mentoring | Peer programs and benchmarking tools help talent leaders build smarter governance and hiring results. |

Understanding AI governance in leadership hiring

AI governance refers to the policies, processes, and accountability structures that guide how artificial intelligence tools are developed, deployed, and monitored within an organization. In leadership hiring specifically, governance covers everything from how an algorithm scores a resume to how an automated video interview system ranks candidates.

AI governance in hiring requires strong frameworks to ensure ethical use, addressing bias, transparency, and accountability in tools for screening and decision-making. Without these frameworks, organizations risk perpetuating systemic inequities or making legally indefensible selection decisions.

The four core pillars of AI governance in leadership hiring are:

- Ethics: Ensuring AI tools align with organizational values and do not cause harm

- Bias reduction: Identifying and correcting algorithmic patterns that disadvantage protected groups

- Transparency: Making AI decision logic accessible and understandable to HR professionals and candidates

- Accountability: Assigning clear responsibility for AI-driven decisions and their outcomes

These pillars operate differently in AI-driven hiring compared to traditional approaches. The table below highlights key distinctions:

| Dimension | Traditional hiring governance | AI-driven hiring governance |
|---|---|---|
| Decision traceability | Interviewer notes, rubrics | Algorithm logs, audit trails |
| Bias detection | Structured interviews, D&I audits | Automated bias testing, explainability tools |
| Accountability | Hiring manager, HR | Algorithm owner, cross-functional team |
| Transparency | Documented criteria | Model interpretability requirements |
| Speed of review | Periodic | Continuous or staged cycles |

Organizations also benefit from membership-based governance insights, which connect them to established governance models used by peer organizations in their sector.

"AI governance is not a compliance checkbox. It is an ongoing operational discipline that must be embedded into every stage of the hiring lifecycle."

For organizations newer to governance strategy, peer mentorship for AI oversight provides structured access to experienced practitioners who have navigated these complexities. Connecting with cross-functional leadership forums also helps talent leaders align governance priorities across HR, legal, and technology functions.

Key governance mechanisms: How organizations ensure ethical AI

Once governance is defined, it is crucial to understand the practical mechanisms organizations can deploy to reduce risk and ensure accountability in AI-driven leadership hiring.

Governance mechanics include cross-functional governance forums, decision-rights frameworks, bias testing covering stability, similarity, and explainability, human override processes, and staged implementation with review cycles. These are not abstract concepts; each one maps to a specific operational practice.

Here is how organizations typically sequence governance implementation:

- Establish a cross-functional governance forum that includes HR, legal, technology, and business leadership. This body owns AI policy, reviews incidents, and sets escalation paths.

- Define decision-rights frameworks that specify which hiring decisions AI can influence, which require human review, and which must remain exclusively human.

- Conduct bias testing at three levels: stability (does the model produce consistent results?), similarity (does it treat comparable candidates equally?), and explainability (can a recruiter explain why a candidate was ranked?).

- Implement human override protocols so recruiters and hiring managers can flag, reject, or modify AI recommendations with documentation.

- Run staged implementation cycles where new AI tools are piloted in limited hiring contexts, reviewed against defined metrics, and only then expanded.
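
The stability and similarity checks in the sequence above can be sketched in a few lines. This is a minimal illustration, not a reference to any vendor's API: `stability_spread`, `similarity_gap`, and `toy_scorer` are hypothetical names, and the toy scorer is deliberately built with a prestige bonus so the check has something to catch.

```python
from statistics import pstdev
from typing import Any, Callable, Dict

Candidate = Dict[str, Any]
Scorer = Callable[[Candidate], float]


def stability_spread(scorer: Scorer, candidate: Candidate, runs: int = 5) -> float:
    """Stability: spread (population std dev) of repeated scores for one candidate."""
    scores = [scorer(candidate) for _ in range(runs)]
    return pstdev(scores)


def similarity_gap(scorer: Scorer, candidate: Candidate, field: str, alternative: Any) -> float:
    """Similarity: score change when only one job-irrelevant field is swapped."""
    twin = dict(candidate)  # copy so the original record is untouched
    twin[field] = alternative
    return abs(scorer(candidate) - scorer(twin))


def toy_scorer(candidate: Candidate) -> float:
    """Hypothetical stand-in for an AI screening tool, with a built-in prestige bonus."""
    bonus = 5.0 if candidate["alma_mater"] == "Elite U" else 0.0
    return 10.0 * candidate["years_leading_teams"] + bonus


candidate = {"alma_mater": "Elite U", "years_leading_teams": 8}
print(stability_spread(toy_scorer, candidate))                       # 0.0: deterministic scorer
print(similarity_gap(toy_scorer, candidate, "alma_mater", "State U"))  # 5.0: prestige changes the score
```

A nonzero similarity gap on a field that should not matter for the role is the signal to escalate to the governance forum. Explainability is harder to reduce to a single number and usually needs tool-specific support.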

The following table maps governance mechanisms to their primary risk addressed:

| Mechanism | Primary risk addressed |
|---|---|
| Cross-functional forum | Siloed decision-making |
| Decision-rights framework | Unclear accountability |
| Bias testing | Discriminatory outcomes |
| Human override | Over-reliance on automation |
| Staged review cycles | Undetected systemic errors |

Pro Tip: Assign a named governance owner for each AI tool in your hiring stack. Accountability without a named individual defaults to no accountability in practice.

Organizations seeking structured support can explore mentorship for governance or use ExecSmart governance benchmarks to compare their maturity level against peer organizations in similar industries.

Bias and edge cases: Addressing AI pitfalls in leadership candidate selection

With mechanisms in place, leaders also need to anticipate and react to emerging bias and unique challenges from AI models. The bias risks in leadership hiring are more specific and consequential than most organizations expect.

General-purpose LLMs such as ChatGPT exhibit ordinal bias, favoring the first resume in a batch, and prestige bias, requiring minority candidates to show higher cost signals in order to compete. Separately, AI-enabled asynchronous interviews deter applicants: completion drops by 50%, especially among women. Each of these represents a systematic distortion that compounds across thousands of hiring decisions.

Key bias types to monitor in leadership hiring:

- Ordinal bias: AI models consistently favor candidates presented first in a ranked list, regardless of relative merit

- Prestige bias: Candidates from underrepresented groups must signal significantly higher credentials to receive equivalent AI scores compared to majority candidates

- Cost signaling effects: AI systems weight visible markers of investment (advanced degrees, elite employers) in ways that systematically disadvantage non-traditional career paths

- Asynchronous interview deterrence: Video interview tools that require self-recorded responses see substantial drop-off rates, disproportionately among women and candidates unfamiliar with the format

Statistic: AI-enabled asynchronous interviews produce a 50% drop in applicant completion rates, with the steepest declines among women candidates.

These are not hypothetical risks. They are documented outcomes that affect real candidate pools in leadership pipelines. Organizations focused on bias in executive search have documented these patterns and developed targeted counter-measures.

Pro Tip: Rotate candidate presentation order in AI-assisted screening tools and run A/B tests quarterly to identify and correct ordinal bias before it compounds across hiring cycles.
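
One way to run the rotation test from the tip above is to present the same batch in every cyclic order and record which slot the tool's top pick occupied. The sketch below assumes a hypothetical `pick_top` callable that wraps whatever screening tool is in use; the two toy pickers exist only to show what a biased and an order-insensitive result look like.

```python
from collections import Counter
from typing import Callable, List, Sequence


def rotations(items: Sequence[str]) -> List[List[str]]:
    """Every cyclic rotation of the batch, so each candidate appears in each slot once."""
    return [list(items[i:]) + list(items[:i]) for i in range(len(items))]


def top_pick_positions(pick_top: Callable[[List[str]], str], candidates: Sequence[str]) -> Counter:
    """Count how often the top pick sat in each presentation slot (0 = shown first)."""
    positions: Counter = Counter()
    for batch in rotations(candidates):
        positions[batch.index(pick_top(batch))] += 1
    return positions


def first_slot_share(positions: Counter) -> float:
    """Share of runs in which the top pick was the first candidate shown."""
    total = sum(positions.values())
    return positions[0] / total if total else 0.0


def always_first(batch: List[str]) -> str:
    """Toy picker with pure ordinal bias: it always chooses the first item."""
    return batch[0]


def alphabetical(batch: List[str]) -> str:
    """Toy order-insensitive picker: it chooses the same candidate in any order."""
    return min(batch)


pool = ["A", "B", "C", "D"]
print(first_slot_share(top_pick_positions(always_first, pool)))  # 1.0
print(first_slot_share(top_pick_positions(alphabetical, pool)))  # 0.25
```

With four candidates, an order-insensitive tool should land near 0.25. A share well above one divided by the batch size suggests position is driving the ranking.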

Talent leaders working on diversity bias mitigation benefit from access to peer data on which tool configurations and audit schedules produce the most equitable outcomes at the executive level.

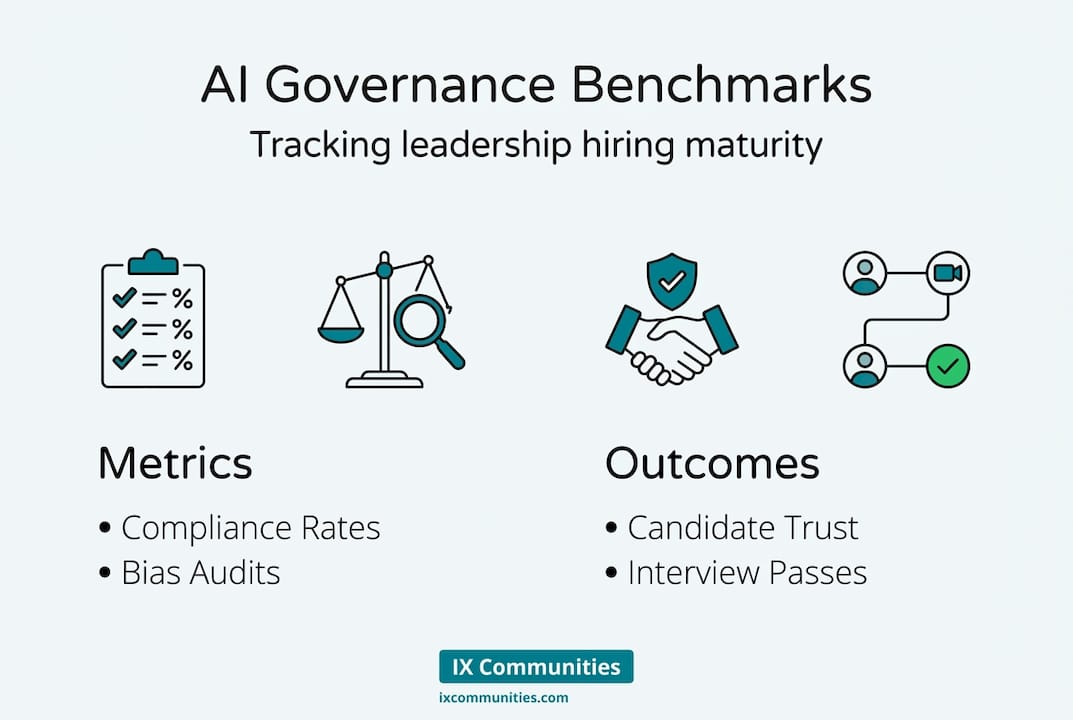

Benchmarks and maturity: Measuring governance and hiring outcomes

Once bias and edge cases are managed, leaders must measure ongoing outcomes and maturity of their governance strategies. Benchmarking provides the data foundation for continuous improvement.

The evidence for AI effectiveness in leadership hiring is clear: AI-selected candidates pass human interviews at a rate of 54%, versus 34% for traditionally screened resumes. That is a gap of 20 percentage points, or a relative lift of roughly 60% in qualified candidate identification. AI also predicts employment success more accurately than human evaluators in structured comparisons.
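
For readers checking the arithmetic, the lift figure is a relative change computed from the two pass rates quoted above, not a difference in percentage points:

```latex
\[
\text{relative lift}
  = \frac{0.54 - 0.34}{0.34}
  = \frac{0.20}{0.34}
  \approx 0.588
\]
```

The result is about 59%, commonly rounded to 60%.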

However, effectiveness alone does not equal good governance. Adoption outpaces governance in 88% of cases, risking compliance and ethical failures even when AI tools perform well on narrow metrics.

A governance maturity model for leadership hiring typically spans four levels:

- Reactive: AI tools are in use but governance is informal and incident-driven

- Defined: Policies exist but are not consistently applied or audited

- Managed: Cross-functional oversight is active, bias testing is routine, and metrics are tracked

- Optimized: Governance is integrated into enterprise risk management, continuously reviewed, and benchmarked externally

Organizations at the Managed and Optimized levels measure governance through:

| Metric | What it measures |
|---|---|
| Compliance rate | Adherence to defined governance policies |
| Bias audit frequency | How often tools are tested for discriminatory patterns |
| Human override rate | Proportion of AI decisions reviewed or reversed by humans |
| Candidate trust score | Applicant perception of fairness in AI-assisted processes |
| Interview-to-offer conversion | Quality of AI-selected candidate pools over time |
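
Two of these metrics, compliance rate and human override rate, can be computed directly from a decision log. The sketch below is illustrative only: the `ScreeningDecision` record and its field names are assumptions, and a real applicant tracking system will expose different fields.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class ScreeningDecision:
    """One logged AI-assisted screening decision (hypothetical schema)."""
    ai_recommended_advance: bool  # what the tool recommended
    human_reviewed: bool          # whether a person reviewed the recommendation
    final_advance: bool           # the decision actually taken
    policy_followed: bool         # whether the governance policy was followed


def human_override_rate(log: Sequence[ScreeningDecision]) -> float:
    """Share of AI decisions that a human reviewed or reversed."""
    if not log:
        return 0.0
    touched = sum(
        1 for d in log
        if d.human_reviewed or d.final_advance != d.ai_recommended_advance
    )
    return touched / len(log)


def compliance_rate(log: Sequence[ScreeningDecision]) -> float:
    """Share of decisions that adhered to the defined governance policy."""
    if not log:
        return 0.0
    return sum(1 for d in log if d.policy_followed) / len(log)


log = [
    ScreeningDecision(True, True, True, True),    # reviewed, recommendation upheld
    ScreeningDecision(True, False, True, True),   # accepted without review
    ScreeningDecision(False, True, True, False),  # reviewed and reversed
    ScreeningDecision(True, False, True, True),   # accepted without review
]
print(human_override_rate(log))  # 0.5
print(compliance_rate(log))      # 0.75
```

An override rate near zero can indicate over-reliance on automation rather than a well-performing tool, so the two numbers are best read together.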

"Governance maturity is not about having more tools. It is about knowing which decisions your AI is making and having the structures to verify them."

Access to leadership hiring benchmarks allows talent leaders to compare their organization's metrics against industry peers. Platforms like ExecSmart provide structured data specifically calibrated for executive hiring outcomes.

What most organizations miss about AI governance in executive hiring

Most organizations treat AI governance as a risk management exercise rather than a capability-building discipline. That distinction matters significantly at the executive hiring level.

General-purpose large language models are not well-suited for leadership candidate evaluation. Domain-specific models outperform general LLMs on equity and accuracy in executive hiring, and established frameworks like the NIST AI Risk Management Framework and the four-fifths rule for disparate impact audits provide practical structure that most HR teams have not yet adopted.
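
The four-fifths rule mentioned above is simple to apply: compute each group's selection rate, divide by the highest group's rate, and flag any ratio below 0.8. The following is a minimal sketch with hypothetical function names and made-up counts. A flag is a screening signal that warrants closer review, not a legal conclusion.

```python
from typing import Dict, List, Tuple

# counts[group] = (number selected, number of applicants)
Counts = Dict[str, Tuple[int, int]]


def selection_rates(counts: Counts) -> Dict[str, float]:
    """Selection rate per group, skipping groups with no applicants."""
    return {g: selected / total for g, (selected, total) in counts.items() if total > 0}


def impact_ratios(counts: Counts) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(counts)
    best = max(rates.values(), default=0.0)
    if best == 0.0:
        # Nobody was selected, so no disparity can be measured from this data.
        return {g: 1.0 for g in rates}
    return {g: rate / best for g, rate in rates.items()}


def flagged_groups(counts: Counts, threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(g for g, ratio in impact_ratios(counts).items() if ratio < threshold)


# Illustrative numbers only: 30 of 100 selected in one group, 18 of 100 in another.
counts = {"group_a": (30, 100), "group_b": (18, 100)}
print(impact_ratios(counts))
print(flagged_groups(counts))  # ['group_b']
```

Here group_b's rate is about 60% of group_a's, below the four-fifths threshold. Executive searches often involve small candidate pools, where ratios swing widely on one or two decisions, so results at that scale need cautious interpretation.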

Upskilling HR and talent acquisition professionals on model interpretability is equally important. Talent leaders cannot govern what they cannot read. Familiarity with how AI systems weight inputs, generate scores, and surface candidates is now a core competency for any function responsible for executive selection.

The practical wisdom from peer communities points to three consistent actions that separate mature governance programs from those that stall: regular third-party audits, structured continuous improvement cycles, and active participation in expert peer networks. Connecting with sector-specific mentoring brings leaders into contact with colleagues who have solved the same governance challenges in comparable organizational contexts.

Peer mentoring and resources to advance your AI leadership hiring strategy

Putting these strategies into practice requires more than internal effort. Talent leaders benefit from access to structured peer programs, validated benchmarking tools, and expert-led resources designed specifically for large corporate hiring functions.

IXCommunities, ESIX, and TLIX provide a secure environment where Heads of Talent Acquisition and Chief People Officers can share governance frameworks, compare benchmarking data, and learn from peers who are navigating the same AI governance challenges. The talent leaders peer mentoring program connects members with experienced practitioners for structured guidance on governance maturity. Benchmark surveys offer empirical data to calibrate your organization's progress and identify specific improvement priorities across bias testing, compliance, and hiring outcomes.

Frequently asked questions

What is AI governance in leadership hiring?

AI governance is the set of policies and frameworks ensuring ethical, fair, and transparent use of AI in screening and selecting leaders. Strong governance frameworks address bias, transparency, and accountability in all AI-assisted hiring tools.

How can organizations detect and reduce bias in AI-driven leadership hiring?

Organizations use bias testing, human overrides, and diversity audits to identify and mitigate unfavorable algorithmic patterns. Key mechanisms include explainability requirements, decision-rights frameworks, and staged review cycles.

What metrics should leaders use to assess AI governance maturity?

Review compliance rates, bias audit frequency, human override rates, and candidate trust indicators. AI-selected candidates pass human interviews at a 60% higher rate, providing a baseline for quality benchmarking.

Why is sector-specific AI recommended over general LLMs for executive hiring?

Sector-specific models are better calibrated to leadership competencies and produce more equitable outcomes. Domain-specific tools decrease bias and improve accuracy compared to general-purpose language models in executive selection.

What practical steps can talent leaders take to improve AI governance?

Integrate cross-departmental oversight, conduct regular bias audits, upskill talent teams on model interpretability, and leverage peer mentoring. Centralizing HR oversight and integrating governance into enterprise risk management are foundational steps for mature programs.